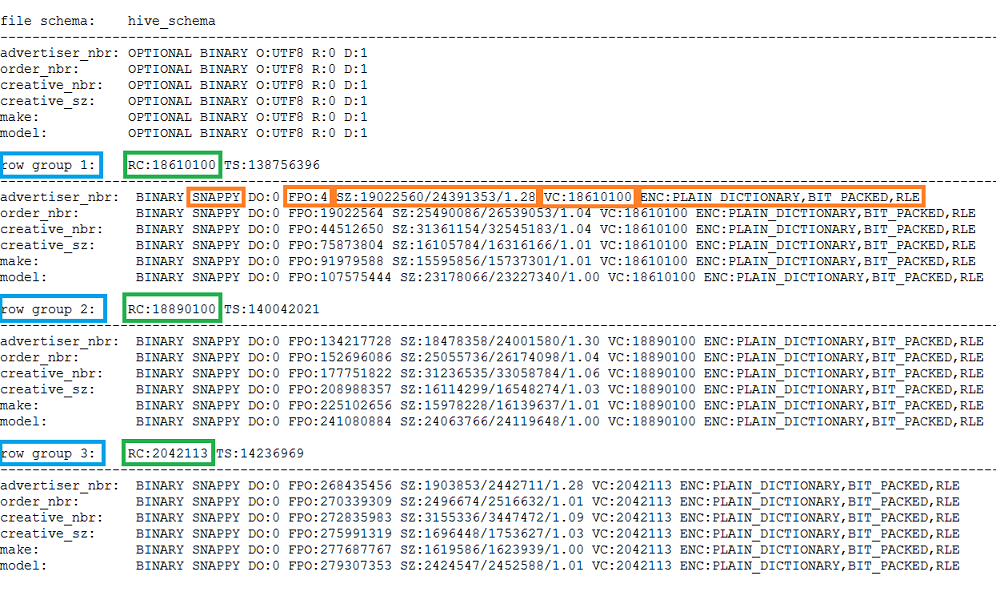

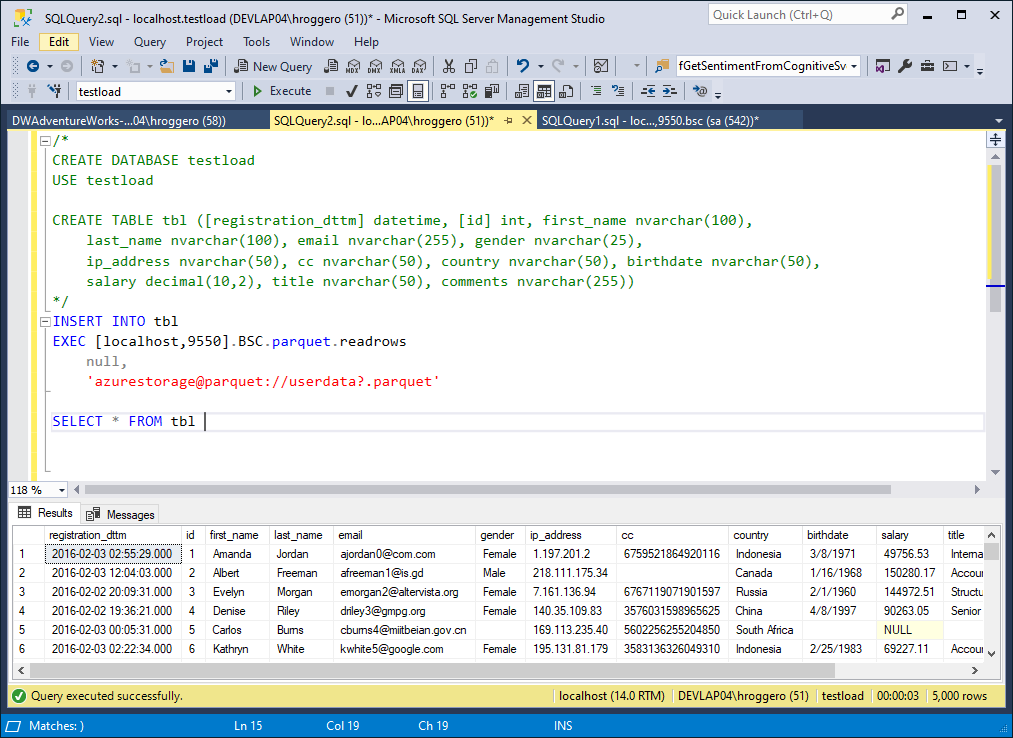

While writing about querying a data lake using Synapse, I stumbled upon a Power BI feature I didn’t know was there. Data lakes are becoming more common every day, and so is the need for tools to query them.

When reading from a data lake, each folder is like a table. We store many files with the same structure in the folder, each file containing a piece of the data. Data lake tools are prepared to deal with data in this way and read the files transparently for the user, but Power BI required us to read one specific file, not the folder.

The feature I’m illustrating in this article is in fact a combination of two features. If we google (verb: to google) Power BI and Parquet files, we can find many workarounds to read Parquet files in Power BI, but no mention of the new Parquet connector released last November ( ), so I had to write about it.

You can view Parquet files on Windows / macOS / Linux by having DBeaver connect to an Apache Drill instance through the JDBC interface of the latter:

1. On the Apache Drill download page, choose the links for "non-Hadoop environments".
2. Click either "Find an Apache Mirror" or "Direct File Download", not "Client Drivers (ODBC/JDBC)".
3. Extract the downloaded archive, cd into the extracted folder and run Apache Drill in embedded mode: `cd apache-drill-1.20.2/`, then `bin/drill-embedded`.
4. You should end up at a prompt saying `apache drill>` with no errors.

Not many tools like this exist, and they mostly aren't well documented. This is due to Parquet being a very complicated file format (I could not even find a formal definition). The ones I've listed are the only ones I'm aware of as I'm writing this response.

In addition to the extensive answer, there is one further question I encountered in this context: how can I access the data in a Parquet file with SQL?

As we are still in the Windows context here, I know of only a few ways to do that. The best results were achieved by using Spark as the SQL engine with Python as the interface to Spark. I assume that the Zeppelin environment works as well, but I have not tried that out myself yet.

There is a very well-done guide by Michael Garlanyk that walks one through the installation of the Spark/Python combination. Once it is set up, I'm able to interact with Parquet files through:

```python
from os import walk
from os.path import join, splitext

from pyspark.sql import SparkSession

spark = SparkSession.builder.getOrCreate()

parquetdir = r'C:\PATH\TO\YOUR\PARQUET\FILES'

# Get all parquet files in the top level of the dir and expose them to Spark SQL.
# There might be easier ways to access single parquets, but I had nested dirs
dirpath, dirnames, filenames = next(walk(parquetdir), (None, [], []))

# For each parquet file, i.e. table in our database, Spark creates a temp view
# whose name equals the parquet filename without the .parquet extension
for parquet in filenames:
    if parquet.endswith('.parquet'):
        table = splitext(parquet)[0]
        spark.read.parquet(join(parquetdir, parquet)).createOrReplaceTempView(table)
```

Once your Parquet files are loaded this way, you can interact with them through the PySpark API, for example by querying the registered views with `spark.sql()`.
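The loop above only looks at the top level of `parquetdir` (that is all `next(walk(...))` returns) and registers one view per file. If your directories are nested, one possible variant, sketched here under my own assumptions, is to walk the whole tree with `os.walk` and treat every folder that holds `.parquet` files as one table, following the data-lake convention that each folder is a table and each file a piece of it. `DataFrameReader.parquet` accepts several paths, so all pieces of a table end up in one DataFrame. The helper names `find_parquet_tables` and `register_tables` are hypothetical, and naming a view after its folder assumes folder names are unique and usable as SQL identifiers.

```python
import os
from collections import defaultdict


def find_parquet_tables(root):
    """Hypothetical helper: map each folder under `root` that contains
    .parquet files to the sorted list of those files (one folder = one table)."""
    tables = defaultdict(list)
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.lower().endswith(".parquet"):
                tables[dirpath].append(os.path.join(dirpath, name))
    # Assumption: the folder's own name is the table name and is unique;
    # a later folder with the same name would overwrite an earlier one.
    return {
        os.path.basename(os.path.normpath(folder)): sorted(paths)
        for folder, paths in tables.items()
    }


def register_tables(spark, tables):
    """Hypothetical helper: register one Spark temp view per folder,
    reading all of that folder's files into a single DataFrame."""
    for table_name, paths in tables.items():
        spark.read.parquet(*paths).createOrReplaceTempView(table_name)


# Usage, assuming a working PySpark installation:
# from pyspark.sql import SparkSession
# spark = SparkSession.builder.getOrCreate()
# register_tables(spark, find_parquet_tables(r'C:\PATH\TO\YOUR\PARQUET\FILES'))
```

Passing the explicit file list rather than the folder path avoids relying on how Spark treats sub-folders of a directory path, which in this scheme belong to other tables.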
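On the remark that Parquet is a complicated file format: the outer framing, at least, is simple and described in the Apache Parquet format specification. A file begins and ends with the 4-byte magic `PAR1`, and the 4 bytes just before the trailing magic hold the length of the footer as a little-endian 32-bit integer. The footer carries the Thrift-encoded metadata (schema, row groups, column statistics) that tools like Drill or Spark read first. The stdlib-only sketch here checks that framing and extracts the raw footer bytes; it does not decode the Thrift structure itself, which is where most of the complexity lives, and the function names are my own.

```python
import struct

MAGIC = b"PAR1"


def parquet_footer_length(data: bytes) -> int:
    """Return the size in bytes of the Thrift-encoded footer.

    Layout assumed (per the Parquet spec): b"PAR1" ... footer,
    4-byte little-endian footer length, b"PAR1".
    """
    if len(data) < 12 or data[:4] != MAGIC or data[-4:] != MAGIC:
        raise ValueError("not a Parquet file: missing PAR1 magic bytes")
    (footer_len,) = struct.unpack("<I", data[-8:-4])
    # The footer must fit between the leading magic and the length field.
    if footer_len > len(data) - 12:
        raise ValueError("footer length points outside the file")
    return footer_len


def read_footer_bytes(path: str) -> bytes:
    """Return the raw, still Thrift-encoded footer of the file at `path`.

    Reads the whole file for simplicity; a real tool would seek to the end.
    """
    with open(path, "rb") as f:
        data = f.read()
    footer_len = parquet_footer_length(data)
    return data[-8 - footer_len:-8]
```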
